April 07, 2026

Healthcare

AI Copilots in Healthcare: Minimizing Diagnostic Risks With Smart Virtual Assistants

Key Takeaways

- AI copilots act as always-on virtual assistants managing documentation, triage, and decision support so clinicians can focus on patients.

- With 12 million diagnostic errors and up to 80,000 deaths annually, AI copilots are built to catch what overloaded clinicians miss, not replace them.

- Dragon Copilot saves clinicians around 5 minutes per encounter with patients and has reduced burnout for 70% of surveyed clinicians.

- Successful AI deployments tend to share the same pattern: phased rollouts, EHR-native integration, and continuous monitoring.

- Build vs. buy depends on your workflows. Off-the-shelf covers common ground; custom handles specialty needs and proprietary data.

AI copilots are designed to address diagnostic errors of exactly this kind. Tools like Microsoft Copilot for healthcare are entering clinical workflows to help catch what humans miss, not by replacing doctors but by acting as a second set of eyes.

The Diagnostic Error Problem AI Copilots Are Built to Solve

Diagnostic errors are the most common, costly, and dangerous type of medical mistake. About 5% of outpatient encounters involve a diagnostic error, and roughly half of those could lead to serious harm, which puts around 6 million people a year at risk of real consequences from a missed or delayed diagnosis.

The causes are well documented: clinicians face heavy caseloads, EHR documentation burdens, and alert fatigue from systems that generate too many warnings. Research from the National Library of Medicine puts diagnostic error rates at 10–15% across most clinical settings, driven by cognitive overload, poor communication between care teams, and siloed data. More training alone cannot solve these structural problems. Clinicians need real-time support inside the tools they already use, which is where AI copilots come in.

What an AI Copilot in Healthcare Actually Does

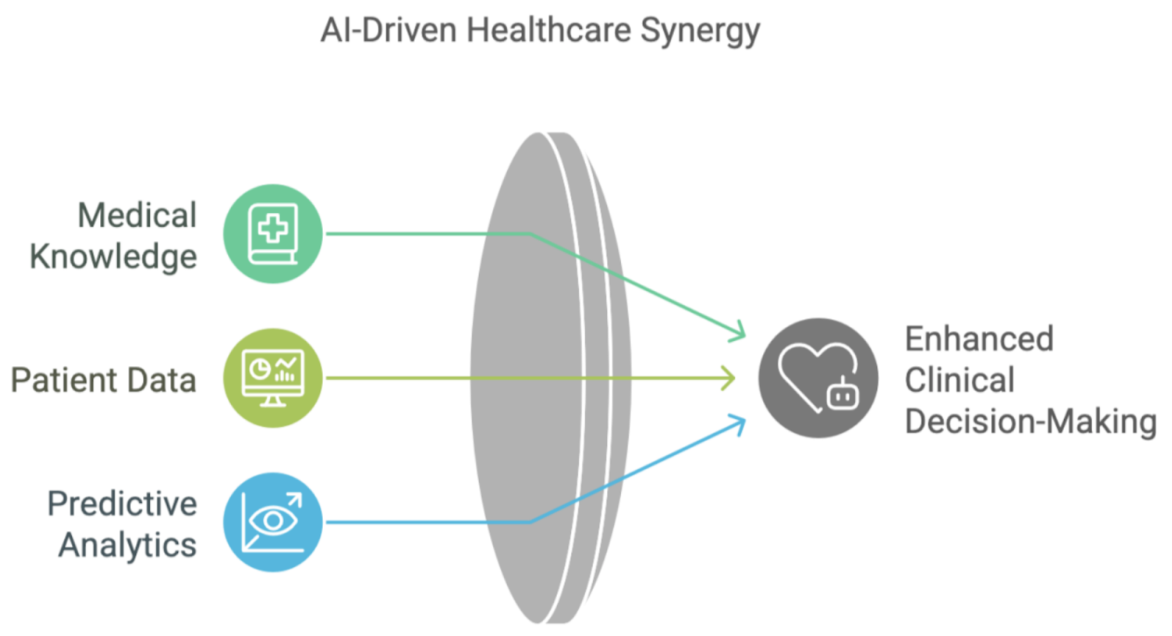

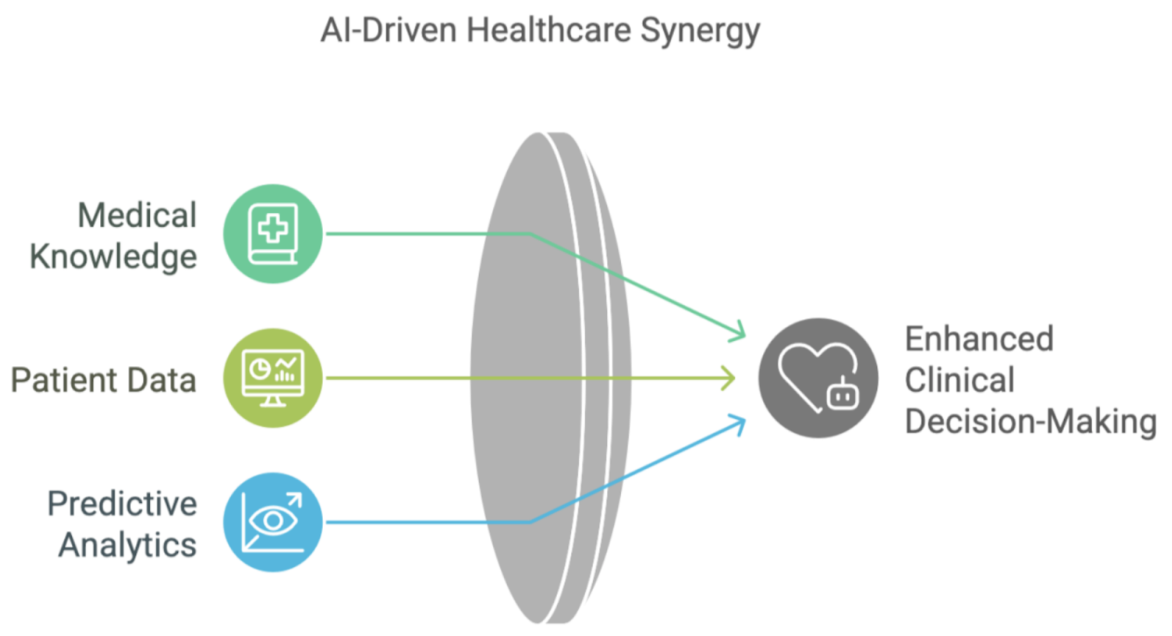

An AI copilot is neither a chatbot nor a standalone diagnostic algorithm. It is a decision-adjacent assistant embedded in the clinical workflow: it sits inside the EHR, listens to the patient encounter, and surfaces relevant information in real time.

The core functions include:

- Real-time clinical decision support

- Automated documentation

- Symptom triage and risk stratification

- Drug interaction alerts

- Evidence retrieval at the point of care

AI Copilots vs. Traditional Clinical Decision Support Systems

Traditional CDSS tools are rule-based and reactive. They fire alerts when a predefined condition is met, such as a drug interaction flag, but they cannot interpret unstructured clinical notes or synthesize information across different data sources. AI copilots powered by large language models can. They read the full context of a conversation, identify patterns across disparate records, and deliver recommendations tailored to what is happening in the exam room. The result is fewer irrelevant alerts and more clinically meaningful prompts at the right time.

Source: LinkedIn

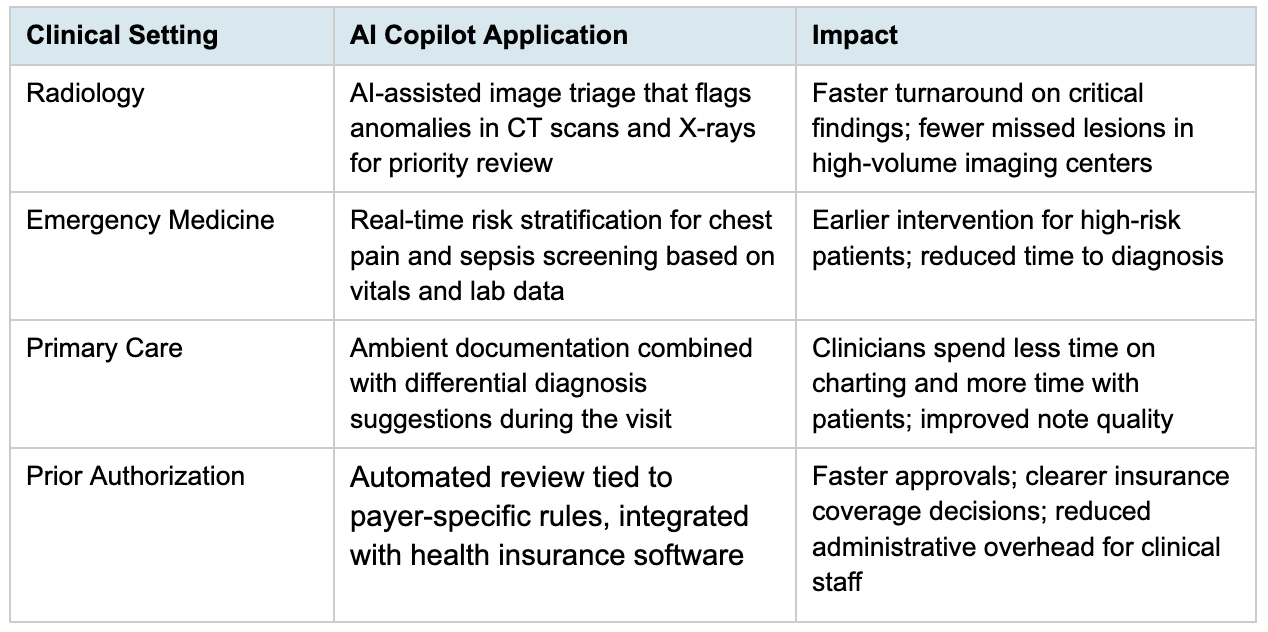

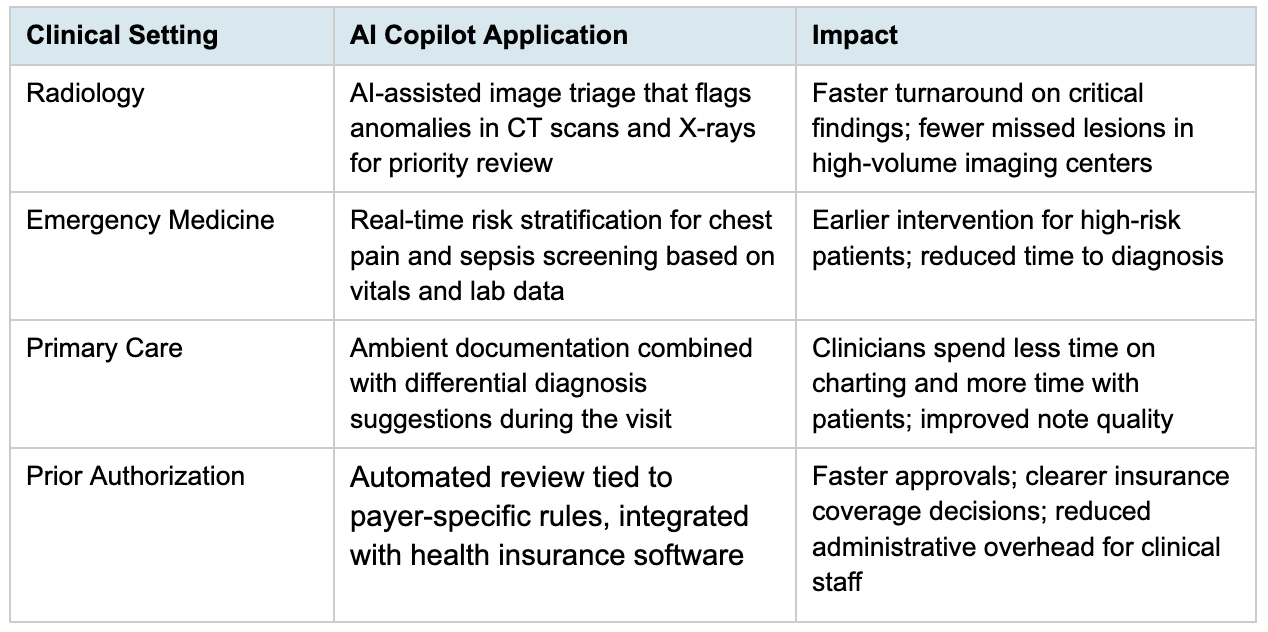
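To make the contrast concrete, here is a minimal Python sketch of the rule-based alerting that traditional CDSS tools rely on. The `INTERACTIONS` table, the `Alert` type, and `check_interactions` are hypothetical names chosen for illustration, and the two listed pairs are only examples; a production system would draw on a curated, clinically validated drug knowledge base.

```python
from dataclasses import dataclass

# Hypothetical lookup table for illustration only. A real CDSS would load
# interaction pairs from a clinically validated knowledge base.
INTERACTIONS = {
    frozenset({"warfarin", "aspirin"}): "increased bleeding risk",
    frozenset({"simvastatin", "clarithromycin"}): "increased myopathy risk",
}


@dataclass(frozen=True)
class Alert:
    drugs: tuple[str, str]
    reason: str


def check_interactions(medications: list[str]) -> list[Alert]:
    """Fire one alert for every medication pair found in the predefined table."""
    # Normalize and de-duplicate names so "Aspirin" and "aspirin " match.
    meds = sorted({m.strip().lower() for m in medications})
    alerts = []
    for i, first in enumerate(meds):
        for second in meds[i + 1:]:
            reason = INTERACTIONS.get(frozenset({first, second}))
            if reason is not None:
                alerts.append(Alert((first, second), reason))
    return alerts


# A structured medication list triggers the rule.
structured = check_interactions(["Aspirin", "Metformin", "warfarin"])

# Free-text wording from a clinical note does not, because the rule only
# matches exact names in the table.
free_text = check_interactions(["aspirin", "a blood thinner"])
```

The second call returns no alerts even though the note describes the same risk. That gap between exact-match rules and unstructured clinical language is the one LLM-based copilots are meant to close.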

AI Copilots Across Specific Clinical Settings

AI copilots are not theoretical. They are already being used in several clinical contexts, each with its own specific value proposition.

Microsoft Copilot for Healthcare and What It Offers Clinicians

Microsoft has been making aggressive moves in the healthcare AI space. In March 2026, the company launched Copilot Health, a dedicated feature within its Copilot assistant that aggregates personal health data from wearables, electronic health records, and lab results. As Fortune reported, the tool connects to over 50,000 U.S. hospitals and provider organizations, handles more than 50 million consumer health questions per day, and pulls verified content from credible sources like Harvard Health. Microsoft describes it as a step toward “medical superintelligence.”

On the clinical side, Microsoft Dragon Copilot (formerly DAX Copilot) is the company’s ambient AI documentation tool for clinicians. It captures the patient–clinician conversation in real time, converts it into a structured clinical note, and integrates directly into EHR systems like Epic. According to a Microsoft survey of 879 clinicians, DAX Copilot saves an average of five minutes per encounter, and 70% of clinicians report reduced burnout and fatigue. Across more than 600 healthcare organizations, it has assisted over 3 million patient conversations in a single month.

Still, Microsoft Copilot for healthcare is not without limitations. LLM-generated content carries hallucination risk, regulatory status is still evolving, and integration complexity varies by existing infrastructure.

Where Microsoft Copilot Fits Into an Existing Health IT Stack

For IT buyers, the integration question matters. Dragon Copilot runs on the Microsoft Azure ecosystem and supports HL7 FHIR APIs for interoperability. Its native integration with Epic is a significant advantage for health systems already running that EHR. The broader Microsoft Cloud for Healthcare platform includes the Azure Health Bot framework, enabling organizations to build custom AI agents for patient engagement, triage, and administrative workflows.

Risks That Come With AI Copilots in Clinical Settings

Despite their clear benefits for both clinicians and patients, AI copilots are not risk-free. Any honest assessment has to account for the places where they fall short:

- LLM Hallucination. LLMs can produce confident but incorrect output. In a clinical setting, that could mean a wrong dosage or a missed contraindication. Mitigation: every AI-generated recommendation needs human review, with clinician sign-off built into the workflow.

- Alert Fatigue. A poorly configured copilot can reproduce the same problem as legacy CDSS systems: too many alerts, not enough signal. Mitigation: set thresholds carefully during the pilot, prioritize high-severity notifications, and let clinicians adjust sensitivity for their specialty.

- Training Data Bias. If the training data underrepresents certain demographics, model recommendations will too. Mitigation: audit datasets for representation, run regular bias testing across patient subgroups, and retrain as more diverse data becomes available.

- Over-Reliance. Clinicians who lean too heavily on AI support may gradually lose diagnostic sharpness. Mitigation: position the copilot as a second opinion, not a first answer, and maintain training programs that reinforce independent clinical reasoning.
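The alert-fatigue mitigation above (severity thresholds, high-severity alerts first, per-specialty sensitivity) can be sketched as a small filter. Everything here is an assumption made for illustration: the `Severity` scale, the `CopilotAlert` type, and the threshold values are hypothetical, and real thresholds would come out of the pilot rather than a hard-coded table.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    LOW = 1
    MODERATE = 2
    HIGH = 3
    CRITICAL = 4


@dataclass(frozen=True)
class CopilotAlert:
    message: str
    severity: Severity


# Hypothetical defaults. In practice these would be tuned during the pilot
# and adjusted by clinicians for their own specialty.
DEFAULT_THRESHOLD = Severity.MODERATE
SPECIALTY_THRESHOLDS = {
    "emergency": Severity.HIGH,  # example: a busy ED suppresses more alerts
}


def alerts_to_show(alerts: list[CopilotAlert], specialty: str) -> list[CopilotAlert]:
    """Drop alerts below the specialty's threshold and list the most severe first."""
    threshold = SPECIALTY_THRESHOLDS.get(specialty, DEFAULT_THRESHOLD)
    visible = [a for a in alerts if a.severity >= threshold]
    return sorted(visible, key=lambda a: a.severity, reverse=True)


incoming = [
    CopilotAlert("duplicate order", Severity.LOW),
    CopilotAlert("contraindicated combination", Severity.CRITICAL),
    CopilotAlert("dose near upper range", Severity.MODERATE),
    CopilotAlert("abnormal lab trend", Severity.HIGH),
]

emergency_view = alerts_to_show(incoming, "emergency")
default_view = alerts_to_show(incoming, "cardiology")
```

With these example settings, the emergency view keeps only the critical and high alerts, while a specialty without an override also sees the moderate one. Low-severity noise is suppressed in both.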

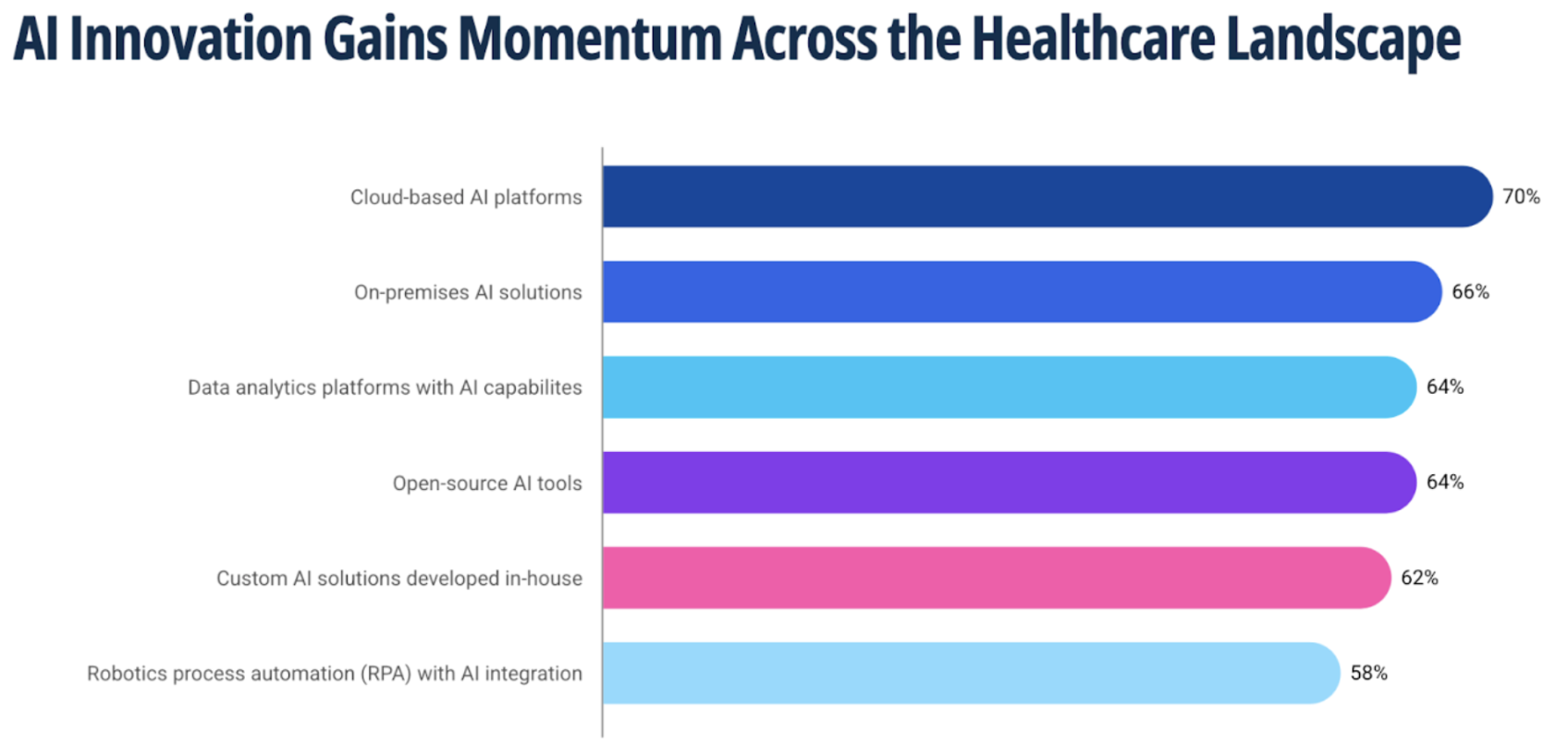

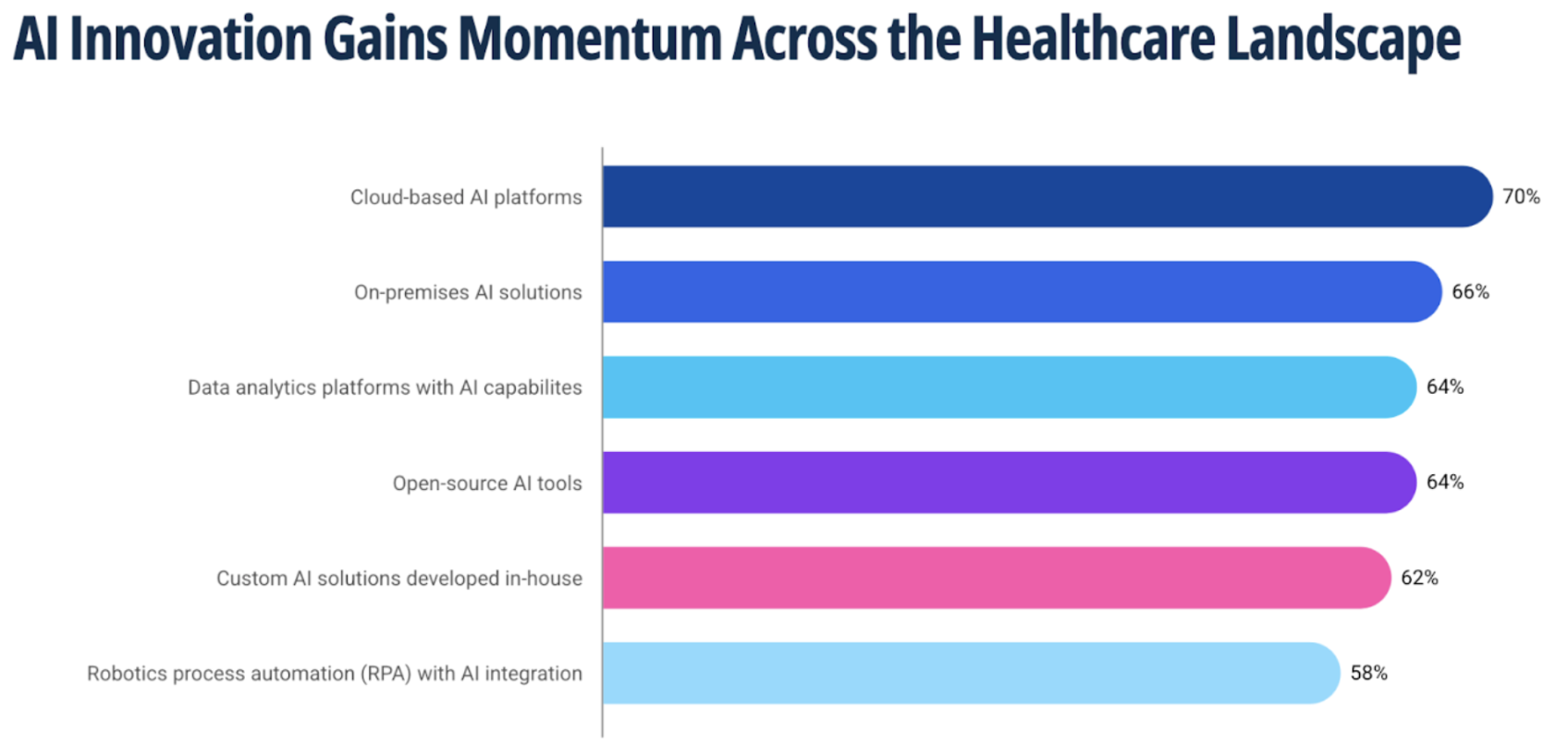

AI Adoption in Healthcare: Where U.S. Health Systems Stand Today

AI adoption in healthcare is accelerating, driven by growing demand for smarter clinical tools, but not uniformly. KPMG’s 2025 Intelligent Healthcare report found that 62% of healthcare organizations are developing AI solutions in-house, with 65% of U.S. healthcare companies saying AI is already having a measurable impact on operations. Generative AI, speech recognition, and agentic AI are the most common applications.

Source: KPMG

Still, organizations face significant operational challenges in implementation. The most common bottlenecks include:

- Data Quality. AI output is only as good as the data behind it. Most healthcare organizations are still dealing with inconsistent records and legacy systems that don't share information well.

- Skills Gaps. Deploying AI effectively requires clinical and technical expertise most teams don't have in-house. Without the right people, even well-built systems underdeliver.

- Compliance Uncertainty. HIPAA and CMS guidelines set a high bar for any AI tool handling patient data. A compliance architecture needs to be built in from day one, not added later.

- Clinician Trust. Skepticism is common when AI recommendations come without clear reasoning. Earning buy-in takes transparency and solid validation data — not a top-down mandate.

What Responsible Implementation Looks Like in Practice

Getting the technology right is only half the challenge. Successful organizations put as much thought into how they roll out AI copilots as into what they buy or build. A few patterns show up consistently in successful deployments:

- Phased Rollout with Pilot Cohorts. Start small: pick a specific department or specialty, measure results, and expand from there. Deploying across an entire health system on day one is a recipe for failure.

- EHR-Native Integration. Standalone AI tools that live outside the clinical workflow get ignored. The copilot needs to be embedded directly into the EHR to be useful.

- Human-in-the-Loop Review. Every AI-generated recommendation should be reviewed by a clinician before it is acted on. No exceptions.

- Continuous Monitoring and Retraining. AI models drift over time. Performance needs to be tracked, and the model needs to be updated as new data comes in.

- Staff Training and Change Management. Clinicians need to be equipped with a clear understanding of what the tool does, what it does not do, and how to use it effectively. Without this, adoption stalls.

Organizations planning for AI deployment should also build predictive modeling into their broader analytics strategy to maximize the value of AI across clinical and operational workflows.
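Two of the patterns listed above, human-in-the-loop review and continuous monitoring, can be sketched in a few lines of Python. This is an illustrative outline under stated assumptions, not a description of any vendor's implementation: `Suggestion`, `release`, and `AgreementMonitor` are hypothetical names, and using the clinician acceptance rate as a drift signal is one simple proxy among many possible metrics.

```python
from collections import deque
from dataclasses import dataclass
from typing import Optional


@dataclass
class Suggestion:
    text: str
    approved_by: Optional[str] = None  # clinician identifier once signed off


def release(suggestion: Suggestion) -> str:
    """Refuse to act on any suggestion that lacks clinician sign-off."""
    if suggestion.approved_by is None:
        raise PermissionError("clinician review required before use")
    return suggestion.text


class AgreementMonitor:
    """Track how often clinicians accept suggestions over a rolling window.

    A falling acceptance rate is treated here as a hint that the model may
    have drifted and should be re-evaluated (an assumption for illustration).
    """

    def __init__(self, window: int = 100, min_rate: float = 0.8) -> None:
        self.outcomes = deque(maxlen=window)
        self.min_rate = min_rate

    def record(self, accepted: bool) -> None:
        self.outcomes.append(accepted)

    def rate(self) -> float:
        if not self.outcomes:
            return 1.0
        return sum(self.outcomes) / len(self.outcomes)

    def needs_review(self) -> bool:
        # Only flag once the window is full, to avoid noisy early readings.
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.rate() < self.min_rate


monitor = AgreementMonitor(window=4, min_rate=0.75)
for accepted in (True, True, True, False):
    monitor.record(accepted)
flag_after_four = monitor.needs_review()   # rate is exactly 0.75, not below it

monitor.record(False)                      # the oldest outcome drops out
flag_after_five = monitor.needs_review()   # rate is now 0.5
```

The gate in `release` makes sign-off a hard precondition rather than a convention, and the monitor turns "track performance over time" into a concrete check that can trigger a retraining review.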

Building vs. Buying the Right Healthcare AI Copilot

For healthcare systems evaluating their options, the build-versus-buy question matters. Off-the-shelf platforms like Microsoft Dragon Copilot work well for common use cases such as ambient documentation, clinical decision support, and patient engagement. If your workflows align with what the platform offers out of the box, that is usually the faster and more cost-effective route.

But if your organization has specialty workflows, proprietary data sets, or complex payer integration requirements, a custom AI solution may be the better fit. Custom development gives you full control over the model, the data pipeline, and the user experience, and lets you build for specific regulatory requirements from the ground up rather than working around the limitations of a general-purpose tool.

A hybrid approach often works best—using a commercial platform for common workflows and building custom components where differentiation matters. The choice should be driven by clinical and operational needs, not vendor hype. If you are exploring what healthcare software development looks like for AI-powered tools, the first step is understanding where your biggest gaps are.

What This Looks Like in Practice: AI-Powered Urgent Care

To see what custom AI development looks like in an urgent care setting, consider a project Kanda built for a comprehensive emergent and urgent care center founded by two ER doctors. The organization needed a system to handle the full clinical workflow, from the moment a patient walks in to the final billing step, without the hours of manual data entry. On average, their medical staff was spending two hours on documentation for every hour of patient interaction.

Kanda built a generative AI platform that works across the entire encounter. The system handles real-time triage and symptom assessment, automatically transcribes the patient–clinician conversation, generates structured summaries with AI-driven diagnosis and treatment suggestions for the doctor, and assigns CPT billing codes in real time so the physician knows immediately what insurance covers. It is not technically an AI copilot in the Microsoft sense, but the functional overlap is significant: ambient listening, automated documentation, clinical decision support, and workflow automation all running inside a single integrated system. You can read more about the 4-step workflow Kanda built for this project here.

How Kanda Can Help

Building or integrating an AI copilot into a clinical environment requires deep experience in healthcare workflows, data governance, and regulatory compliance. Kanda has been working in healthcare technology for decades, helping organizations turn AI from a proof of concept into a production-ready tool.

We can help with:

- Evaluating whether an AI copilot fits your clinical or operational goals.

- Designing and building custom AI assistants tailored to specialty workflows and proprietary data.

- Integrating AI tools into your existing EHR and health IT infrastructure using HL7 FHIR and standard interoperability protocols.

- Building compliance frameworks that address HIPAA, FDA SaMD requirements, and CMS guidelines from day one.

- Developing ongoing monitoring and retraining protocols to keep AI performance reliable over time.

Conclusion

AI copilots in healthcare have moved past the pilot stage. They are reducing documentation burden, flagging diagnostic risks, and supporting better decisions at the point of care. The combination of ambient AI, large language models, and deep EHR integration is making these tools genuinely useful for overstretched clinicians.

But deploying them responsibly takes work. Hallucination risk, regulatory uncertainty, training data bias, and integration complexity are real challenges. The healthcare systems that get the most value from AI copilots approach adoption with a clear plan, invest in the right compliance foundations, and partner with teams they trust to understand both the technology and the clinical context.
